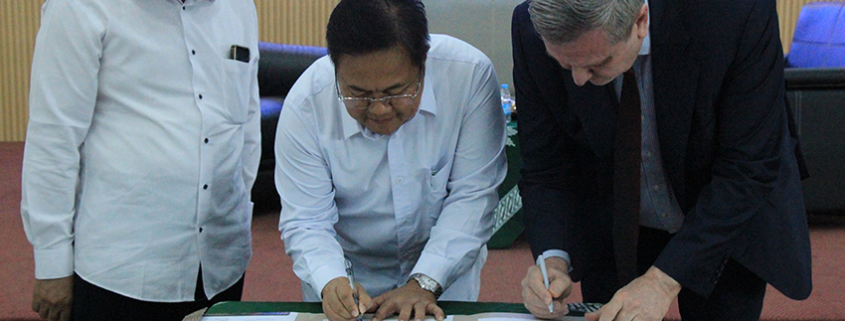

Exciting Updates from Indonesia! MoU Signed with Universitas Negeri Surabaya (UNESA)

On December 19, 2025, we had the privilege of signing a Memorandum of Understanding (MoU) with Universitas Negeri Surabaya (UNESA), officially launching a dynamic partnership to strengthen academic and technological collaboration.